NAVAL POSTGRADUATE SCHOOL

MONTEREY, CALIFORNIA

THESIS


OFFENSE-IN-DEPTH: AN ANALYSIS OF CYBER COERCION (excerpted)

Daniel R. Flemming

Lieutenant, United States Navy

B.S., United States Naval Academy, 2007

Submitted in partial fulfillment of the

requirements for the degree of

MASTER OF SCIENCE IN CYBER SYSTEMS AND OPERATIONS

from the

NAVAL POSTGRADUATE SCHOOL

March 2014

Author: Daniel R. Flemming

Approved by: Neil C. Rowe

Thesis Advisor

Wade L. Huntley

Second Reader

Cynthia E. Irvine

Chair, Cyber Academic Group


ABSTRACT

The U.S. military needs to find alternative ways to exert influence abroad because of the current war-fatigued and budget-constrained environment. This paper explores whether cyber coercion should be considered. Cyber coercion can give the U.S. the ability to use phased aggression to compel adversaries to the bargaining table and avoid unnecessary escalation while expending fewer resources than alternative methods. With cyber as the instrument, users can flexibly tailor the attack with varying levels of force, attribution, and reversibility, depending on the situation. This paper first surveys the space of possible cyber coercion methods. Then, by analyzing a hypothetical scenario involving the U.S. and China, this paper attempts to examine specifics of how cyber coercion could be used. It finds that while there are major risks of abuse, and a smaller than expected window of applicability, the benefits of responsible use make cyber coercion worth adopting as a part of a larger strategic framework.


TABLE OF CONTENTS

I. Introduction

A. Background and Justification

B. Purpose

C. Offense-in-depth Defined

D. Department of Defense Applicability

E. Outline

II. Coercion

A. Coercion Defined

B. Traditional Instruments

C. Deterrence Defined

D. Compellance Defined

E. Role of Force in Cyber Coercion

F. Actors in Cyber Coercion

G. Additional Variables for Cyber Coercion

1. Costs and Investments

2. Legality and Morality

3. Reversibility

4. Attribution

5. Escalation

H. Counterargument and Response to Cyber Coercion

I. Conclusion

III. Methods of Cyber Coercion

A. Anatomy of a Hack

B. Intelligence Collection

C. Cyber Attack Methods

1. Software Vulnerabilities

2. Hardware Vulnerabilities

3. Human Vulnerabilities

D. Targeting for Coercion

1. Outcome

2. Goals

E. Conclusion

IV. Scenario

A. Introduction

B. Scenario Background

1. Chinese

2. Recent Events

C. Scenario Events

1. Targeting

2. Application of Force

D. Analysis

V. Conclusion

List of References

Initial Distribution List

LIST OF FIGURES

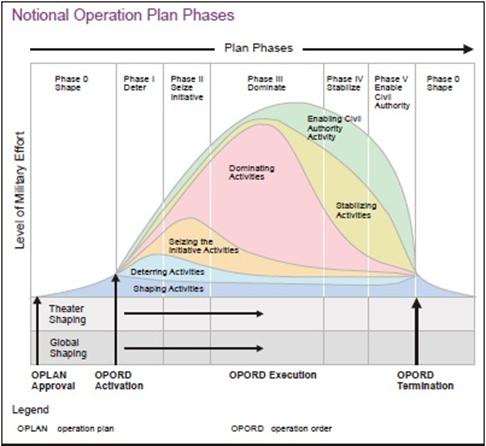

Figure 1. "Notional Operation Plan Phases" (from DOD, 2011b, p. III-39)

Figure 2. "The Coercion Dynamic and Policy" (from Hare, 2012, p. 131)

Figure 3. Conflict Spectrum (from Department of the Army, 2008, p. 2-1)

Figure 4. "U.S. Cyber Command Annual Budget" (from Fung, 2014)

Figure 5. "Anatomy of a Hack" (from McClure et al., 2012)

Figure 6. Coercion Framework (from Byman & Waxman, 2002, p. 27)

Figure 7. Incursion and Deception (from SSG XXXI, 2013, p. 3-16)

Figure 8. "Possible Pathways into the Control System" (from Byres, 2012, p. 2)


LIST OF TABLES

Table 1. Types of Threats (from Bratton, 2005, p. 100)

Table 2. Role of Force (from Bratton, 2005, p. 103)

Table 3. Actors (from Bratton, 2005, p. 108)

Table 4. Definition of Success (from Bratton, 2005, p. 111)


LIST OF ACRONYMS AND ABBREVIATIONS

A2/AD anti-access/area-denial

ADIZ air-defense identification zone

AESA active electronically scanned array

ASEAN Association of Southeast Asian Nations

C2 command and control

C4ISR command, control, communications, computers, intelligence, surveillance, and reconnaissance

CNA computer network attack

CNO computer network operations

CNO Chief of Naval Operations

DDoS distributed denial-of-service

DNS domain name server

DOD Department of Defense

IADS integrated air-defense system

IP Internet protocol

IW information warfare

JOAC Joint Operational Access Concept

LOAC Law of Armed Conflict

MCS machinery-control systems

NATO North Atlantic Treaty Organization

NSA National Security Agency

OS operating system

PLA People's Liberation Army

PLC programmable logic controller

SSG Strategic Studies Group

UAV unmanned aerial vehicle

UUV unmanned underwater vehicle

USB universal serial bus

USV unmanned surface vehicle


ACKNOWLEDGMENTS

I would like to thank my wife, Jenny, for the love and support she has shown during my time at NPS. Furthermore, I would like to thank my advisor, Dr. Rowe, for his useful comments, remarks, and engagement throughout the thesis learning process. I would also like to thank Dr. Huntley for his assistance and guidance with this paper.


You can play the stock market on-line. You can apply for a job on-line. You can shop for lingerie on-line. You can work on-line. You can learn on-line. You can borrow money on-line. You can engage in sexual activity on-line. You can barter on-line. You can buy and sell real estate on-line. You can purchase plane tickets on-line. You can gamble on-line. You can find long-lost friends on-line. You can be informed, enlightened, and entertained on-line. You can order pizza online. You can do your banking on-line. In some places, you can even vote online.

You can perform financial fraud on-line. You can steal secrets on-line. You can blackmail and extort on-line. You can trespass on-line. You can stalk on-line. You can vandalize someone's property on-line. You can commit libel on-line. You can rob a bank on-line. You can frame someone on-line. You can engage in character assassination on-line. You can commit hate crimes on-line. You can sexually harass someone on-line. You can molest children on-line. You can ruin someone else's credit on-line. You can disrupt commerce on-line. You can pillage and plunder on-line. You could incite to riot on-line. You could even start a war on-line. (Power, 2000, pp. 3–4)

As the opening quote illustrates, the Internet can be used for good and evil. To find the dark side, one need only turn on the news to find examples of how the cyber domain is being exploited. Symantec (2013) reported in its annual threat report that there was a 42% increase in targeted attacks from 2011 to 2012. Threats reportedly not only increased, but also expanded to include social media and mobile devices. These numbers are for civilian targets because reporting on military targets remains highly classified, but it is assumed that both kinds of networks share similar risks. The debate as to whether these intrusions constitute crimes, espionage, or attacks is ongoing. The bottom line is that the threat is chronic. Moreover, many nations, including China, Russia, India, Iran, and North Korea, have developed offensive and intelligence-collection cyber capabilities (Technolytics, 2010). Non-state actors, proxy nations, hacktivists, and even terrorist organizations also pose a threat. The question remains what to do about it. Can the U.S. military use cyber force for good, or at the very least to its own advantage? "The establishment of advanced information infrastructures allows the use of digital attacks based on micro applications of force to potentially have strategic influence" (Rattray, 2001, p. 463). We will explore that idea further in this thesis.

In its simplest form, coercion is the use of threats to modify behavior; it is initiating an action that will stop only if an adversary complies. Coercion can include both deterrence and compellance. Cyber deterrence has not been very effective against intelligence gathering and theft, since these types of intrusions continue to happen frequently. Therefore, this paper will look at the compellance side from the perspective of the United States. Compellance can be used either in-kind to stop specific patterns of network intrusions or to alter an adversary's behavior in general, expressly outside of U.S. networks. The vulnerability of civilian networks is reflected in the previous statistics. The U.S. military admittedly does not have a mission to protect civilians from their own careless security practices, which are what enable most of these attacks, and it is unlikely that coercion could even stop specific kinds of intrusions. Instead, it is more beneficial to look at cyber as the persuasive instrument in a larger strategic coercion framework to influence other nations abroad. This paper analyzes existing thinking to try to answer the primary question: under what conditions is cyber coercion useful?

Coercion rarely results in total capitulation, but using cyber as the instrument of coercion to force an adversary to the bargaining table while avoiding more costly war is worth exploring. Does the U.S. want the cyber act to be nothing more than a wrist slap, or should it carry the weight of an airstrike? Can it be used as a faster, short-term economic sanction? Can cyber acts be included among the existing political, economic, and kinetic instruments of coercion to show additional resolve, signal commitment, and bolster the threat? "The use of nuclear, conventional (specifically air power), and economic coercion in recent history parallels global transformations," and this paper believes that the use of cyber coercion will continue that trend as offensive cyber operations take an increased role in military operations (Byman & Waxman, 2002, p. 17). Absent extensive use-case examples, our method will use a hypothetical scenario to test the utility of cyber coercion. It concludes that given two near-peer nations on the brink of war, the use of limited, attributable, and reversible acts of cyber coercion, coupled with the threat of subsequent traditional military operations, could potentially be not only useful but also de-escalatory. Adding cyber threats and the limited use of cyber force to the list of tools that the U.S. can use to coerce its adversaries is therefore desirable and should be explored in greater detail.

The U.S. currently has the capability to digitally exploit and attack its adversaries, arguably better than anyone in the world. This cyber skillset is primarily focused on gaining access to foreign networks to gather intelligence or as a force enabler to traditional kinetic operations. Based on recent trends, this paper anticipates that the first volleys prior to war, and specifically prior to kinetic strikes, will most likely be through the cyber domain. This thesis then looks at another possible mission: to stop or modify an existing activity that the U.S. does not like, or to cause an adversary to start a whole new activity that is more beneficial to the U.S., all from the cyber domain. This is something that the U.S. has not openly pursued on a large scale. The ideal would be having cyber coercion take place in the early stages of conflict to prevent additional hostilities. This is the idea of offense-in-depth. In much the same way that a layered defense offers the most protection, so too could a progression in offensive operations be most effective. Aggression is typically phased, as nations do not jump right to nuclear weapons or total war at the outset of conflict. The purpose is to truly allow kinetic war to be a last resort, as international law dictates. For instance, as seen in Figure 1, the U.S. currently uses a six-phase approach in the way it plans military operations (DOD, 2011b). In a similar fashion, this paper foresees coercive cyber acts being used at the outset of a conflict to provide an outlet for nation-states to resolve their difficulties and then move on. The use of additional force and more costly war remain options, but because of cyber coercion, hopefully avoidable ones. As the Chinese military theorist Sun Tzu famously wrote, "For to win one hundred victories in one hundred battles is not the acme of skill. To subdue the enemy without fighting is the acme of skill" (pp. 77–78).

Figure 1. "Notional Operation Plan Phases" (from DOD, 2011b, p. III-39).

Some precedent exists for this "offense-in-depth" idea. Prior to conducting the NATO-led air-strikes in Libya, a cyber attack was considered in the "hopes of bringing them down without a shot" (Sanger, 2012, p. 343). The reason a cyber attack was not conducted is twofold, and most likely reflects the same problems that plague decision makers today: the time needed to successfully employ it and the subsequent capability loss. To be successful and accurate in a cyber attack, extensive knowledge of the adversary's infrastructure is required. This takes time to develop. The other issue is that a cyber attack can really only be used once before the vulnerability is patched and the attack effects nullified. Therefore, the capability to attack a specific vulnerability will be lost after launch until a new vulnerability is found and access to it subsequently established. Weighing the pros and cons of using cyber exploits will be situationally dependent. For Libya, other options that could achieve a similar goal were deemed more appropriate. This may not be the case in the future.

The situation leading up to Stuxnet, which targeted Iran's nuclear production facility in Natanz, also exemplifies the concept of offense-in-depth. Sanger (2012) attributes Stuxnet, codenamed Olympic Games, to the United States and Israel. Bombing the Iranian nuclear facility at the outset, as the Israelis had done against Syria in 2007, was estimated to cause only a two-year delay because the Iranians were further along in the nuclear process. The strike might also cause the Iranians to emerge more unified and determined than before. A kinetic strike would lead the Iranians to harden their bunkers and their resolve, which would then require additional kinetic strikes in the future that would undoubtedly be less effective. The idea behind using a cyber attack at the outset was that Iran would probably not anticipate it, and if that attack failed, Iran could always be bombed later as originally planned. While Stuxnet is an act of sabotage and does not map perfectly onto coercion, the ultimate objective and thought process can translate to future coercion applications. The fallout from Stuxnet may have played a role in forcing Iran to the bargaining table over its nuclear program. The accord reached in November 2013 put a temporary freeze on the Iranian nuclear program (Gordon, 2013). By adding offensive cyber capabilities to the foreign diplomacy toolbox, the U.S. can hopefully be better prepared and more effective in the manner in which it exerts influence abroad.

Operation Olympic Games demonstrated improved cyber capabilities and bolstered attack credibility, and even its weak attribution created an aura around U.S. cyberwarfare. This provides a unique advantage when approaching future conflicts and is something that can assist the effectiveness of coercion. Rather than jumping straight to dangerous and expensive kinetic operations or enacting slow economic sanctions, the U.S. can take advantage of the opportunities cyberwarfare provides. Cyber operations can then become the first and hopefully last step, or the first of many steps, when attempting to coerce adversaries and avoid more costly war. This paper analyzes the utility of taking advantage of such a concept and the increased possibilities it can provide to a larger coercion framework. In the end, cyber coercion is just one of many options the U.S. can use to exert influence: an option that will be useful in some instances and useless in others.

The concept of coercion is nothing new and should not be considered counter to military thought and practice. Freedman (1998) writes that "military strategy in and of itself is defined as the use of force by one entity to impose its will on another" (p. 36). This, he continues, is nothing more than "organized coercion" on a massive scale located somewhere on a spectrum bookended by consent and total control (p. 36). In the current political landscape, "when confronted with a direct threat to American security, Obama has shown he is willing to act unilaterally – in a targeted, get in-and-out fashion, that avoids, at all costs, the kind of messy ground wars and lengthy occupation that have drained America's treasury and spirit for the past decades" (Sanger, 2012, p. xiv). This is demonstrated by the bin Laden raid, escalating drone strikes, and Stuxnet. Cyber operations are one of the few areas to receive increased funding from the president as part of his "light-footprint" strategy (Sanger, 2012, p. 243; DOD, 2012a). As Commander-in-Chief, the president has proven his embrace of "hard, covert power" (Sanger, 2012, p. xvi). With a war-fatigued public and in a budget-constrained environment, the use of cyber coercion can offer one way for the U.S. to continue to ensure military dominance around the world.

It is critical to plan for this type of warfare now, so it is available when time-sensitive targets arise. For instance, it took about eight months to put together the first plan of execution for Stuxnet. Overcoming air-gapped networks like the one attacked in Iran explains some of that time. The U.S. and Israel supposedly also took the extra time to make the malware as accurate and stealthy as possible to comply with the Law of Armed Conflict (LOAC). This is important because the worm caused physical damage and could be interpreted as a use of force; but by doing so, the creators were setting the standard for future applications. It is dangerous to have nations freely launching cyber munitions at each other for the slightest perceived fault. If the U.S. takes the lead, it can continue to lay the groundwork for future applications. Even if the U.S. never chooses to launch another cyber attack in the future, continuing to establish footholds in adversary networks can provide persistent intelligence gathering opportunities.

A military cyber coercion strategy is important because it has the potential of "reducing the risks of war" and "limiting the costs of war that do occur" (Cimbala, 1998, p. 181). The end goal of a coercive act is to show the target the futility of its current strategy and induce concessions, not necessarily to contribute directly to the military victory of the coercer (Freedman, 1998). It is not a demonstration of simple sabotage or brute force but rather the attaching of a message and meaning to the coercive act. "Even a potentially promising use of force can be squandered by an ineffectual diplomacy," and the use of force tends to complicate, compromise, and harden attitudes (Freedman, 1998, p. 33). Therefore, any coercive strategy is going to be a balance or even a competition between the political and the military, whether short of war or in war (Cimbala, 1998). The complexity of that relationship is reflected in the fact that cyber expertise resides in the military and the intelligence organizations, so the capabilities and use-case examples live in the classified world and are not readily accessible or well-known to the political leaders who will make the decisions to employ cyber force. For instance, Stuxnet "...was originally under military command until after President Obama settled into the Presidency" (Sanger, 2012, pp. 200–201).

It is likely that decision makers will second-guess the benefit of giving up a valuable cyber capability outside of an actual military conflict or covert intelligence collection operation to "maybe" coerce an adversary. The military simply cannot control every aspect of cyber use. Byman and Waxman (2002) argue that "success in coercive contests seldom turns on superior firepower" (p. 229). Otherwise, in recent years the U.S. would rarely have lost and would almost always have gotten what it wanted. Problems will undoubtedly be political. Traditionally, military coercion has been weakened by leadership's aversion to casualties and to committing adequate amounts of force. When cyber is the instrument, however, it offers leaders the ability to limit or eliminate casualties, to act unilaterally, and to require a smaller military footprint.

A more pressing question is whether the effect created by the cyber act can strike a balance between being strong enough to coerce and being so strong that it escalates the conflict. Sometimes the most influential act would only invite retaliation. The U.S. could be one of the nations most vulnerable to cyber attacks, as evidenced by the statistics in the opening paragraph, so it must weigh the risks of retaliation prior to trying to compel adversaries through cyber coercion. The U.S. also fears that more dangerous scenarios are lurking around the corner, for which it must save its cyber attacks. It does make sense to avoid frequently launching attacks, since that would send the wrong message to adversaries and increase the risk of retaliation. The answer to all international conflict problems cannot be found exclusively in the cyber domain, and cyber coercion might only be appropriate for a small subset of conflicts. The appropriate threshold for use has yet to be established, and the U.S. should take this opportunity to set precedent. Since the world is in the early stages of nation-states conducting offensive cyber operations with little regulation and few treaties, it is important for the U.S. to realize that other nations will not necessarily take the time to comply with international norms or use any type of judgment at all prior to launching their form of Stuxnet (Sanger, 2012).

The thesis is organized as follows. Chapter I introduces the justification and the purpose of the study. It also explains the meaning of the title term, offense-in-depth, and describes the relevance of the topic for the DOD. Chapter II defines coercion. It explores traditional instruments, many of the variables that will help determine successful implementation in a cyber application, and a counterargument to using cyber coercion. Chapter III researches examples of cyber attacks that might be employed and common mechanisms for achieving the desired level of influence over an adversary. Chapter IV analyzes the utility of using cyber coercion in a hypothetical scenario involving China and the U.S. Finally, the thesis concludes with a summary and recommended future work in Chapter V.

Cyberwarfare has the potential to change the way we fight the wars of the future – questions raised by these weapons need to be part of the public conversation. (Sanger, 2012, p. 270).

The ability to hurt gives a nation bargaining power, and coercion is one way a nation can choose to take advantage of that bargaining power (Schelling, 1966). Coercion at its core is the threat of more to come, so it is critical to understand what the enemy values to use it successfully. The end goal is to get the enemy not to proceed with its current course of action because it will be too painful or costly. Coercion does not always have to inflict costs; when compensation is the norm, coercion can also withhold benefits (Nye, 2011). Freedman (1998) argues that a nation's interference in another's affairs is denoted by a spectrum with extremes marked by consent and control. He continues that coercion "lacks the legitimacy provided by consent or the certainty promised by control," and, worse, if it fails it would then require either a more aggressive approach that more closely resembles control or a more conciliatory approach that resembles consent (Freedman, 1998, p. 17).

The strategy of the actors and the attributes of the conflict environment are central to what determines the success of coercion. These variables reflect each unique situation, which makes coercion a complex issue to dissect and hampers the creation of a prescriptive implementation. As Byman and Waxman (2002) put it, "writing on coercion requires modesty – the topic itself defies easy description" (p. 23). Definitions of the details of coercion vary depending on the author and term used, and this varying nature helps explain its previous failures. Even with sufficient understanding, the biggest difficulty is always in the application. This will be especially true when translating traditional coercion characteristics to the cyber domain. Bratton (2005) provides a useful summary highlighting the differences he found in what experts define as coercion. He divided coercion into four main categories, found in Tables 1–4: the types of threats, the role of force, the actors, and the definition of success.

| What types of threats are involved in coercion? | Authors who concur |
| --- | --- |
| Only compellent threats (i.e., coercion is different from deterrence) | Alexander George, Janice Gross Stein, Robert Pape |
| Both compellent and deterrent threats (deterrence and compellance are both types of coercion) | Thomas Schelling, Daniel Ellsberg, Wallace Thies, Lawrence Freedman, Daniel Byman, and Matthew Waxman |

Table 1. Types of Threats (from Bratton, 2005, p. 100).

| What role does force play in coercion? | Authors who concur |
| --- | --- |
| Coercion before the use of force | George, Gross Stein |
| Coercion only through force | Pape |
| Coercion through diplomacy and force | Schelling, Thies |

Table 2. Role of Force (from Bratton, 2005, p. 103).

| Who are the actors? | Authors who concur |
| --- | --- |
| Best thought of as identical, unitary, rational calculating actors | Schelling, George, Pape,* and Daniel Drezner |
| Rational actors that can be somewhat different (democracies vs. authoritarian governments) or are made up of a few simple parts (government, military, public, etc.) | Pape,* Risa Brooks, Byman and Waxman |
| Complex governments that both threaten and respond to threats differently | Thies, David Auerswald |

Table 3. Actors (from Bratton, 2005, p. 108).[*]

| How is success defined? | Authors who concur |
| --- | --- |
| Full compliance with coercer's demands, independent of any other factors | Pape |
| Need to distinguish degrees of success. Possible to have partial success or secure secondary objectives without securing primary objectives | Kimberly Elliot, Drezner, Karl Mueller, and Byman and Waxman |

Table 4. Definition of Success (from Bratton, 2005, p. 111).

This thesis most closely follows Schelling's definition of coercion, which includes both compellance and deterrence. Compellance causes an adversary to stop an action, while deterrence causes an adversary not to take a certain action. This paper focuses on the compellance aspect of coercion, and thus the terms coercion and compellance will be used interchangeably, but the codependence of compellance and deterrence in the larger coercion discussion is fully recognized. Coercion will include limited force, but with a caveat: in the successful coercion of an adversary, the target should retain the ability to use violence yet choose not to employ it (Byman & Waxman, 2002). It is not violence for the sake of violence. This is the difference between sabotage and coercion. Coercion is conducted between nation-states, although the specific target can vary within that nation (e.g., the military or the government). Lastly, coercion is a dynamic process with degrees of success that vary with the situations in which it is used. For instance, forcing an adversary to the bargaining table, while not a complete victory, can still be viewed as a valuable accomplishment, especially if the alternative is more costly war. Schelling's framework primarily deals with the nuclear age, and although there are similarities with cyber weapons, Libicki (2011) correctly points out that treating them the same can be misleading. This chapter hopes to show that Schelling's conceptual framing of coercion has broader resonance by beginning with a description of traditional coercion and then transitioning to many of the unique characteristics of cyber coercion.

The most common non-lethal, non-military coercive instruments are economic sanctions and diplomatic demarches. Sanctions attempt to create broad discontent within the targeted nation while also weakening the financial assets of the power base (Byman & Waxman, 2002). Sanctions are a low-cost option that often requires the help of other countries to be most effective. Because of its powerful economy, the U.S. has been able to enact them unilaterally in the past, although this ability is arguably dwindling. Since most crises lack urgency, sanctions are a way to act decisively without escalating. However, they are easier for third parties to undermine and can also raise humanitarian issues. Sanctions are difficult to target specifically and so have had mixed results in the past. For instance, Syria is currently subject to a wide range of U.S. sanctions, which have clearly been unable to stop the ongoing civil war, whereas international sanctions appear to be working against Iran (Kessler, 2011). Diplomatic demarches are another frequently used instrument of coercion. Byman and Waxman (2002) write that this instrument "carries the lowest cost, demands little, and carries little risk" (p. 115). Diplomatic demarches are an attempt at political isolation, but when used alone their impact can be negligible. Governments use demarches when they want to send a message to another government with as little risk as possible. Demarches attempt to influence the power base's perception of its international standing. The problem is that most countries simply do not care.

Non-lethal, military instruments of coercion include the threat of conventional and nuclear retaliation. Conventional threats often require a use of force to be credible, which the coercer may be unable to follow through on. Even when the coercer possesses a clear superiority, "it often lacks the ability or will to apply that strength and inflict costs" (Byman & Waxman, 2002, p. 19). Conventional examples provided in the U.N. Charter include "demonstrations, blockade, and other operations by air, sea, or land forces" (U.N., 1945, 7:42). The last phrase provides important use of force exemptions that embrace military coercion as a way to settle disputes and recognizes an implicit concept of limited war (Lango, 2006). Conventional military coercion is the back and forth between the military plans of the coercer and the target (Pape, 1992). The back-and-forth nature stresses the importance of strategy; the adversary still gets a vote in coercion. This is best demonstrated in the Cold War when "small-scale wars and subversion and counter-subversion waged through local proxies became a common mode of superpower conflict" (Waxman, 2011, p. 441).

The idea behind nuclear threats is that their use punishes the society with more pain than it could possibly stand, "so much so that battlefield outcomes become unimportant by comparison" (Pape, 1992, p. 464). Nuclear compellance has questionable effectiveness because it is unlikely a rational leader would ever actually use nuclear weapons if the target nation called the bluff. At the same time, nuclear deterrence has helped avoid the start of direct interstate conventional military action between major powers since 1945. Military coercion can also include lethal use of force, but these tactics are normally reserved for actual conflicts. At one end of the lethal spectrum is support for insurgencies. This is an escalatory act because international norms typically discourage instigating a civil war in another sovereign territory. More lethal options include air strikes, invasions, and land grabs, which all border on sabotage and brute force rather than coercion.

As a part of a larger coercion framework, deterrence is closely tied to compellance, as shown in Figure 2. Although not the focus of this paper, it still must be briefly defined. Freedman (1998) writes that coercion is the merging of deterrence and compellance, and that coercion is "to deter continuance of something the opponent is already doing" (p. 19). Byman and Waxman (2002) also write about the codependence of deterrence and compellance that is best demonstrated when the success of previous coercion attempts validates the coercing nation's credibility, which then affects the ability to deter. For instance, if a nation can compel an adversary into stopping existing attacks, then that adversary might ultimately not attack in the future because it feels it is not worth it, thus leading to better deterrence.

With regard to cyber attacks, deterrence is difficult because of constantly changing technology, a diverse threat environment, attribution difficulties, the difficulty of communicating credible threats, the lack of clear thresholds, and the risk of targeting innocents with automated responses. Some of these variables also apply to cyber coercion and will be explored later in this chapter. The secrecy that surrounds cyber capabilities also makes it difficult to work with allies and partners to share new techniques and expose enemy vulnerabilities. There are some possibilities of success for cyber deterrence against nation-states because major attacks rarely happen in a vacuum and will involve other political, military, or economic goals (Kugler, 2009). Attackers may be uncertain whether the post-attack environment will be better or worse for them and therefore choose not to attack, which means uncertainty can be as powerful as projecting certainty in cyber deterrence (Lukasik, 2010). Ultimately, deterrence of intrusions into U.S. digital networks is failing because of a disconnect between U.S. cyber capabilities and any credible threat of retaliatory punishment. Therefore, at least at this time, compellance is the more pressing issue. Focusing on the compellance side of coercion can allow the U.S. to be proactive, using cyber operations to shape world events and take the fight to its adversaries.

Figure 2. "The Coercion Dynamic and Policy" (from Hare, 2012, p. 131).

Schaub (1998) writes that compellance is "designed to alter the status quo" (p. 58). It is "an act that could not forcibly accomplish its aims but one that hurts the target enough to induce compliance anyways" (Schaub, 1998, p. 42). The goal is to simultaneously increase the perception of the coercer's capabilities and will to continue while limiting the target's capabilities just enough to influence its will not to continue. It is a blend of threats to use force and the use of limited force in connection with a threat. There is a wide spectrum of application. Thies (2003) writes that at times the relationship between threats and force is complex because initial threats of compellance can fail, yet a coercion strategy can still succeed, as demonstrated by NATO in Yugoslavia. The verbal threats and posturing by the Clinton administration were unable to compel the Serbs until the actual application of force in the form of the NATO air war. The addition of cyber as an instrument within the U.S.'s coercive toolkit will hopefully open up new opportunities not possible with just the traditional economic, diplomatic, or kinetic tools previously used. This is because even threatening destructive military force is banned by international law, whereas the nuances of cyber use of force have yet to be clearly defined by international norms. The U.S. can capitalize on these legal ambiguities and set precedent.

Before attempting to compel, each side must consider the "value of the target, the power that can be demonstrated, and the extent they can credibly communicate this information" (Schaub, 1998, p. 58). Schaub (1998) describes six additional tenets that need to be considered when attempting to compel an adversary: "demands, motivation, balance of capability, clarity of demands, publicness of demands, and illustrative use of force" (p. 58). The demands can include the coercive actor inducing the target to stop an action, altering or undoing an action, or inducing another action altogether. The motivation and balance of capability should take into account both the aggressor and the target to be most successful. The clarity of demands must also include what additional actions will occur if there is non-compliance. The publicness of these demands and the potential use of force act as signaling aimed at shaping the bargaining process. These tenets represent the science of compellance that must be understood before actual use. Any hope of successful application depends on understanding these factors. The art of coercion is then successfully applying them in the absence of perfect knowledge.

Due to the breadth of cyber capabilities, initial thoughts seem to favor threats over actual force for use in coercion. Using threats alone is more in line with traditional nonlethal coercion, as in theory, truly successful threats do not need to be carried out (Byman & Waxman, 2002). Cyber threats can force targets to doubt their ability to wage information warfare successfully because of the perceived threat of the coercer's capabilities. Threats inject fear and doubt into the performance and security of a network, and then the thought of an adversary in a nation's networks could be exploited for a coercive purpose. Just maintaining a publicized standing cyber force serves to taunt institutions (Libicki, 2011). In peacetime, this effect is not wholly debilitating as every network accepts a certain level of risk. Digital infrastructures are not constantly being shut down at the first sign of network penetration, but these threats do drive the cyber defense industry and generate fear of ever-looming cyberwar. This simply reflects the constant back-and-forth relationship of attacker and defender found in any domain. In wartime, however, fear and doubt are magnified because of the increased risk of death. If warfighters begin to doubt the accuracy of information on their computers, or the performance of the military systems to which they have entrusted their lives, then cyber threats can create the tipping point at which warfighters become unwilling to engage the enemy.

Given that there are no actual real-world examples of cyber coercion, however, there is an even stronger need for credibility and demonstration to generate the desired effect. Byman and Waxman (2002) point out that sometimes no amount of threatened force will actually compel an adversary to yield. To establish credibility, force will most likely be required. The notable cyber incidents in Estonia, Georgia, and Iran have shown that preemptive cyber aggression can be used to enact powerful effects. These incidents can create the necessary credibility and proof of concept for future uses of cyber coercion, even if they were employed differently. Whether through threats or actual force, the tailorability of options found in cyber coercion allows for a wider spectrum of application than with traditional instruments. Cyber acts can incorporate elements of all other types of coercion and add unique elements of their own. The downside is that among peer or near-peer competitors, embracing offensive cyber capabilities could simply encourage more competition and the militarization of cyberspace. This has arguably already occurred, and the U.S. now needs to figure out how to succeed and maintain an advantage in the current environment.

The amount of actual force required to coerce via cyber will undoubtedly be situationally dependent and one of the most difficult aspects of employing it. Too weak, and it can lead to embarrassment and capability loss; too strong, and it can lead to retaliation and escalation. The promising news is that the threshold for military retaliation from the use of cyber acts will likely be higher than any kinetic equivalent (Waxman, 2013). Although cyber attacks can clearly cause damage, that damage is arguably less than that of other strategic weapons, and when used by themselves cyber attacks would most likely be only an irritant to the targeted nation (Lewis, 2011). Multiple examples of distributed denial-of-service (DDoS) attacks and web defacements in the news throughout the past decade seem to support this claim. Nation-states are simply not prepared to act in response to these low-level intrusions. The risk of unintended propagation also helps curb the extensive use of more powerful attacks.

The problem with low-level intrusion examples is that they do not carry any coercive weight. Liff (2012) writes that cyberwarfare can serve as a tool to pursue strategic objectives in only a limited number of circumstances. He continues that "no examples of irrefutable effective coercive CNA exist" (Liff, 2012, p. 426). The more powerful attacks in history have provided only brute force measures during or precipitating conflict. There is a thin but distinguishable line between targeting for coercion and targeting for destruction (Cimbala, 1998). Brute force sabotage eliminates the target's options, and coercion is built upon choices. Byman and Waxman (2002) write that "simple pain inflicted does not translate into leverage" (p. 48). The problem is that this creates a continuum of approaches in which the coercer must find something short of total destruction but powerful enough to compel the desired behavior. Coercion is most successful when targeting what the enemy values, so "there is the risk of the most sensitive pressure point, if pressed, provokes the most dangerous response" (Byman & Waxman, 2002, p. 224). Figuring out the right balance of threats and force will require evolutionary understanding. Coercion is a process more than a singular event because conflicts with two determined parties tend to last a long time.

Power is the ability to get others to do something they would not normally do. This is a critical tenet of coercion. The power to hurt is nothing new, but thanks to technology proliferation, many people can inflict it on anyone from anywhere (Schelling, 1966). In the cyber domain, power diffusion exists due to the varied threat vectors. Nye (2010) writes that nation-states will remain the most dominant, but the delta will shrink and it will be more difficult to exert control. A reduction in power is not the same as equalization though, as nation-states will still have larger resources to mount the most dangerous types of attacks. There is a clear demarcation between the quantity and quality of actors in cyberspace. If a country wishes to exert its power to coerce via cyber most effectively, it must simultaneously attempt to defend its own gaping vulnerabilities. Nye (2010) writes of a paradox that more cyber power also creates more vulnerability. This is caused by the direct relationship between a reliance on highly technical systems and the ability to wield cyber power. A less technical country may have limited cyber capabilities but also cannot be coerced through cyber by the U.S., whereas the U.S., with arguably incredible cyber power, has inherent vulnerabilities and is therefore itself susceptible to cyber coercion. Liff (2012) suggests that the most successful use of cyber coercion will be in peer or near-peer disputes because of the ability to sustain cyber operations, the ability to bargain due to the fear of escalation, and the credibility of threats when reinforced by conventional or nuclear forces.

Although acquiring cyber capabilities has a low point of entry, effective use of cyber coercion is limited to the dominant conventional powers of today. Nation-states represent the most dangerous cyber threat. Cyberwarfare is expensive and labor-intensive, and it is unlikely that anyone who could use it to successfully coerce would be at a level less than the nation-state (Lucas, 2011). Peer or near-peer nations to the U.S. also have less interest in creating instability in the world and ruining the authority of sovereign nations; they recognize the risks of instigating and escalating major conflicts (Libicki, 2011). We further concentrate on adversaries rather than simply peers or near-peers. Coercion by cyber attack cannot be conducted against countries with which the U.S. has agreements on criminal tort law because they could then sue the U.S. for any damage caused. It is therefore possible only for country pairs, like the U.S. and China, that do not have mutual extradition in cases of sabotage and product tampering. Otherwise, it would be impossible to wage offensive cyber because the costs of paying damages would be so high. This all places the emphasis on using cyber coercion at the higher end of the spectrum of conflict as shown in Figure 3, or in the case of this thesis, hopefully the avoidance of total war.

Figure 3. Conflict Spectrum (from Department of the Army, 2008, p. 2-1).

A problem with coercion is that it likely will not go according to plan. As the German military strategist Helmuth von Moltke famously stated, "No plan survives contact with the enemy." Nations operate with incomplete and inaccurate information, and decision making is further short-circuited by the presence of strong emotions in any conflict. Nations are just as likely as human beings to stray from rational decision making, especially when forced to make decisions as a result of external international influence (Byman & Waxman, 2002). Schelling makes an assumption about states acting rationally. This could be perceived as a fault, but an enemy that might not seem rational is not necessarily "wholly irrational," especially with regard to something like retaining power (Kugler, 2009, p. 325). Successful coercion will depend on understanding both the adversary, to better predict its strategy, and the strengths and weaknesses of the instrument used to coerce. Some of the important variables in the cyber domain will now be investigated.

Freedman (1998) describes some cost elements that come with coercion. The first deals with resistance and enforcement. The victim always has a say, and "mutual coercion" is likely (p. 30). The victim will surely attempt to resist and even retaliate. Coercion becomes a "trial of strength and confirms the balance of power" (Freedman, 1998, p. 29). The second involves compliance. These costs change over time, reflect the back and forth volleying, and are "attached to the eventual settlement" (p. 29). This is where cyber coercion can assist kinetic operations. Through the assistance of a cyber attack, an adversary network is not completely destroyed, but the attacker can potentially enjoy the physical freedom to maneuver undetected. The victim can then see that continuing its existing strategy will be unsuccessful and will potentially be more willing to come to the bargaining table. This compromise represents a partial success. The victim retains the choice to alter its existing strategy and continue fighting, or halt operations altogether. The attacker also retains options to increase the appropriate blend of threats and level of force to influence the desired behavior.

The actual financial costs are reflected in one example of USCYBERCOM's budget, shown in Figure 4. According to the Washington Post, the House of Representatives approved a recent spending bill that plans to double the budget for USCYBERCOM from last year's $212M to this year's $447M; this represents a four-fold increase from USCYBERCOM's inception just four years ago (Fung, 2014). The command also plans to add around 4,000 personnel over the next few years. During fiscally constrained times and military sequestrations, this budgetary increase indicates new capabilities and the importance the nation is placing on cyber operations. While demonstrating growth, it is also important to keep in perspective that USCYBERCOM's budget in 2012 was less than the cost of one new fighter jet (Sanger, 2012). The investment in cyber coercion can potentially allow nations the ability to exert influence in a cost-effective manner.

Figure 4. "U.S. Cyber Command Annual Budget" (from Fung, 2014).

Importantly, no breakdown between offensive and defensive spending is provided; investing in both is required since mutual coercion will likely occur. A key tenet of coercion is to find and threaten an enemy's pressure points, or areas that are important to an adversary but cannot be impenetrably defended. Because of the difficulty of completely securing a network without diminishing its functionality, cyber attacks have an initial advantage as an instrument of coercion. For instance, zero-day exploits by definition target previously unknown vulnerabilities. Thus, the cost of development, implementation, and speed of attack favors the offensive (Liff, 2012). This does not discount the value of defense. Even offensive effects can be used in a defensive manner by either preventing an adversary advantage or by maintaining an attacker advantage. The inability of cyber to be a standalone tool to change the outcome of an extended conflict also favors continued investment in defensive capabilities. Due to the sheer volume of intrusions happening on a daily basis, every organization needs strong defensive measures that are resilient and redundant to absorb the inevitable retaliation. Arquilla (2011) writes that the balance of offense and defense in the future will most likely be that of an action-reaction cycle, while Hichkad and Bowie (2012) draw parallels to air warfare weapons and countermeasures. Successful coercion is dependent on the close relationship of both.

When investigating offensive cyber advantages found in coercion, it is important to remain balanced and not fall into the "cult of the cyber offensive" (Singer & Friedman, 2014). A similar offensive obsession gripped militaries around the world at the turn of the 20th century. Technologies like the railroad, the telegraph, and the machine gun gave the false impression that whoever could mobilize the fastest and go on the offensive would gain the advantage. This obsession ultimately drove military competition that escalated to war. Once WWI actually broke out, the very technologies that the world thought would favor the offensive actually favored trench warfare and the defensive. These are important lessons in the cyber domain. It is no small feat to actually launch a devastating offensive exploit. It requires a great deal of time, money, and expertise not readily available. "Neither Rome nor Stuxnet was built in a day," so the limiting factor for implementing cyber coercion will be time rather than cost (Singer & Friedman, 2014).

Members shall refrain in their international relations from the threat or use of force against the territorial integrity or political independence of any state, or in any other manner inconsistent with the Purposes of the United Nations. (U.N., 1945, 1:2(4))

This article from the U.N. Charter states that nations should refrain from the use of force, but how that relates to cyber operations is an ongoing debate. Article 2(4) does not clearly define what constitutes a use of force. It generally favors a narrower instrument-based definition of force rather than a subjective interpretation of the degree of force. Article 51 deals with the issue of an armed attack. An armed attack is what triggers a state's right to use force in self-defense; an armed attack is a narrower subset of force. Even something that is interpreted as a use of force does not require a state to respond. For instance, many experts feel that since Stuxnet caused physical damage it would constitute a use of force, yet Iran never openly retaliated. In general, the U.S. typically adopts a narrower interpretation of force to only include military attacks, but a broader view for the range of what qualifies for Article 51 self-defense (Waxman, 2011). Determining what actions qualify as mere irritants and what actions threaten a state's existence in the cyber domain is even less clearly defined.

At the extremes, it is clear that certain cyber activities fit within the existing international legal frameworks. "If state-sponsored cyber activities constitute a use of force, then international law governing the use of force (jus ad bellum) and the Law of Armed Conflict (jus in bello) apply" (Foltz, 2012). The problem arises from the large gray area found in many cyber activities. For instance, how the law applies to peacetime state-sponsored cyber coercion is a more difficult question. The difficulty with coercion is determining the line between unlawful coercion and lawful pressure. Foltz (2012) writes that any use of force is typically interpreted as lying between physical harm and the economic and political instruments of coercion. Broad sweeping bans on coercion as a use of force have never been widely accepted. The U.N. Charter specifically mentions economic and political coercive instruments, and even military acts of coercion, like demonstrations and blockades, as acceptable use of force exemptions. Determining the right point to stop using alternative measures is one of the most difficult decisions to make (Lango, 2006).

The ban on force seems to apply primarily to the use of armed military violence. Violent acts do not necessarily cause more suffering than nonviolent ones, however, and are therefore not always the greatest evil. "Most economic and diplomatic measures, even if they exact tremendous costs on target states (including significant loss of life), are generally not barred by the U.N. Charter" (Waxman, 2011, p. 422). That is a weakness of Article 2(4). In today's precise military world, is a targeted military strike really worse than long-term economic sanctions? Yet, with cyber, the non-physical domain is arguably as important as the physical one, further complicating legal interpretation (Taddeo, 2012). The nature of cyber attacks and the fact that we live in a highly connected world mean that a nonviolent attack could actually have more detrimental consequences than a violent one. It is easier for a cyber attack to take down a financial system or power grid and cause panic than it is for the attack to inflict mass casualties. So it seems that in some instances, cyber coercion would qualify for a reasonable use of force exemption, but there is an enormous margin for abuse.

Even threatening the use of force is not allowed under Article 2(4), so using threatened force to prevent potential future hostilities makes the idea of offense-in-depth a legal gray area. There will be a thin line between use of force prior to conflict and at the outset of conflict. In the digital world, it is arguable that all attacks are imminent. How does one even assess imminence when human intent is often the only thing that distinguishes legal exploitation from an illegal attack? Historical statecraft is rife with bluffs and threats that are easy to misinterpret. "Cyberweapons would seem to be an excellent choice for an unprovoked surprise strike" (Lin, Allhoff, & Rowe, 2012). But if nations were able to use cyber as a non-kinetic warning shot at the outset of a conflict, or as a threat of more to come, to modify an adversary's behavior without unnecessary loss of life, then it seems worth permitting.

Preventative or preemptive attacks have always challenged the philosophy of Just War Theory. Arquilla (2011) writes of a cyber-paradox in which the ease of conducting cyberwar might disregard the principle of last resort in favor of the possible efficiency and morality of its use. In order to remain in accordance with Just War Theory, the question is not whether physical war should be a last resort, but rather whether cyberwarfare should be a next to last resort. A tactical military cyber attack could instill fear in the enemy soldier, and when coupled with threatened kinetic strikes for noncompliance, the cyber attack can create strategic and de-escalatory potential. Aucsmith (2012) agrees that the end goal of cyberwarfare is not to win in that domain per se, but rather to garner enough control to affect conflict in the other domains. Hichkad and Bowie (2012) counter slightly by saying throughout history some new weapons can alter the battle-space initially, but none have had the ability to prevent future war.

Cyber coercion gives the military another tool of influence, but its use will be a balance of judging the effect and the motivation behind it because of the enormous risk of abuse. Questions remain whether motivation and intent can even be proven, and whether the potential benefits to the U.S. are worth the potential risks of abuse by adversaries. Schelling (1966) writes that war is an example of who can survive pain the longest, and that technology can make that pain even worse. He was speaking in reference to nuclear weapons, but with regard to cyberwarfare, it may be possible to use technology in a manner that actually avoids unnecessary suffering. This is dependent on the responsibility and motivations of the user in tailoring the offensive cyber act in a way that attempts to reduce the risk of future bloodshed. If there were a way to use cyber instead of kinetic strikes to accomplish the same goals, then why would cyber not be pursued? Denning and Strawser (2013) write that, all things being equal, if a military objective can be achieved with either a kinetic or a non-kinetic option, the commander has a moral obligation to employ the cyber option.

An interesting option for cyber coercion is reversibility. An offensive cyber act is not beyond recall once initiated. Damaged data and programs can be overwritten with the correct information. This is arguably the strongest evidence of the value of cyber coercion. This becomes a positive inducement for the target to submit to the pressure and be no worse off, at least physically. It can provide face-saving cooperation because an adversary can play down the cost of concessions (Byman & Waxman, 2002). This allows cyber coercion to include force with the initial threat yet not risk any permanent damage to the victim. No other coercive instrument makes such an extensive and temporary use of force possible. In fact, the most notable kinetic forms of coercion, nuclear strikes and air strikes, have clearly limited utility in anything short of war without causing war itself (Byman & Waxman, 2002). This is primarily because the fear of use for kinetic and nuclear strikes is rooted in sensitivity to casualties. If targeted responsibly, cyber attacks carry less risk of casualties. Creating reversible attacks also helps the coercer with the risk of retaliation. Even if the ease of launch might encourage increased use, if the retaliatory attacks are in-kind, then at least it encourages the counter-attackers to ensure the damage to the coercer's network would also be repairable.

That an attack is reversible does not mean its temporary effects cannot be powerful, which further strengthens the coercive effect. Any initial use of force should be tied to an actual objective and clearly communicated to the target. This is why the best application for cyber coercion will be through an operational-level attack against the military rather than a strategic attack against the civilian population. The target must understand why it is being targeted so it can choose to comply or resist. Shutting down a nation's early warning radars could mean a lot of casualties if the targeted nation does not submit to the coercer's demands and the conflict is allowed to proceed. Interfering with logistics could increase rates of disease and injury from accidents if the target does not comply quickly. By using these tactics at the outset of a conflict, the goal is to get the target nation to stop its current path and come to the bargaining table. The target nation still retains the capability to defend itself; it is just weakened. That weakness becomes temporary by including a reversibility option, which then increases the likelihood of compliance. In this way, continuing the conflict might be avoidable, which represents a limited but valuable success.

A con to reversibility is that it could create a cyber "fire-and-forget" mentality. Nations could release malware with a shrug, insisting they could always "turn it off" at a later time. China seems to already be doing this with advanced persistent threat attacks targeting data exploitation. Another difficulty in repairing cyber attacks is in localizing their effects to reverse them. Cyber attacks by their nature are difficult to precisely target, so reversing every area of damage could be time-consuming if an attack is not well-planned. This time delay hurts coercion because even if the target acquiesces to the demands, the time it takes to fully reverse the damage could be deemed unacceptable to the target, who might then still retaliate. This could lead to unintended escalation, which will be discussed shortly. The target may also doubt that all traces were actually removed. This argues for the importance of self-attributing attacks. If a signature is applied, then it will be far easier for the coercer to prove that all traces of the attack have been removed because nothing in the target system carries the coercer's digital signature.

There is a relationship between the strength and effectiveness of a cyber attack and the resulting level of attribution. By using "technical data, all-source intelligence, and logical inference" most major attacks should be able to be attributed (Kugler, 2009, p. 319). Even with anonymizing services, digital forensics can discern attackers given enough time (Knake, 2010). The problem is that a full-blown cyberwar could happen very quickly, and indisputable attribution might take days, rather than minutes, so a country may be destroyed by the time the attack is attributed. Hare (2012) argues that unequivocal technical attribution is not required to compel because of the importance of communication. Another indispensable attribute of successful coercion is conveying credible commitment to future action. Violence is used to strengthen a message and not just for simply causing pain. By attributing the act of cyber coercion, it can signal both commitment and credibility.

It may suffice that the coercer clearly communicates to the adversary that the cyber act is in retaliation for a specified fault and that it will stop only after the adversary meets the demands, much like extortion. This ensures that the intended target clearly understands the origin of the threat and its options. For coercion, countries that make the origin of their attacks clear will be better able to achieve the desired effects (Rowe, Garfinkel, Beverly, & Yannakogeorgos, 2011). These effects are often more important than the pure military value of the target because coercion brings both force and a message. Clear attribution can be accomplished by attaching digital signatures to the attack malware, or even by concealing them steganographically. Without the clarity that attribution brings, some effectiveness could be lost. The counterargument is that vague demands can be less humiliating for the enemy. An overtly attributed attack will be embarrassing for the target because there is a perception of being strong-armed by a more powerful nation, which might also encourage retaliation to save face. An attacker might therefore approach an adversary covertly and allow it to back down without the rest of the world clearly knowing the cause. Ultimately, attribution will be situationally dependent and will be but one of many political considerations.

Many of the creators of Stuxnet felt that it would be more valuable if attributed because Iran would realize that the U.S. could unmistakably penetrate Iranian networks, which would send a stronger message. Sanger (2012) believes that the president "fears acknowledging the capability creates pretext for other countries, terrorists, or teenage hackers to justify their own attacks" (p. 265). Non-state groups, let alone terrorist organizations, do not sign international treaties, and all seem willing to conduct hit-and-run attacks. The absence of international norms or treaties is not unique to cyberwarfare in comparison to other new technologies. Although not for any lack of effort, the first treaty limiting nuclear testing was not signed until nearly 20 years after the invention of the nuclear bomb (Sanger, 2012). Operating without clearly defined norms will not prevent other nations from taking advantage of the opportunities provided by this domain, and so the U.S. should not be afraid to do so either.

Byman and Waxman's (2002) idea of "escalation dominance" is magnified when considering cyber as the instrument of coercion (p. 30). They define this as "the ability to increase the threatened costs to an adversary while denying the adversary the opportunity to neutralize those costs or to counter escalate" (p. 30). Since the U.S. is so vulnerable to cyber attacks, it is unlikely to be able to overwhelm an adversary before that adversary counterattacks in kind and exploits U.S. vulnerabilities. The fact that many nations, and individuals for that matter, are able to acquire these capabilities makes the task all the more difficult. That suggests that to be effective, cyber coercion will require combining the initial cyber use of force with the threat of conventional force. With regard to state relationships, Liff (2012) writes that underestimating resolve and the misperception of vulnerabilities could lead to escalation, but coupling that threat with follow-on kinetic strikes could prevent it. The U.S. has an advantage in being able to threaten the use of its powerful nuclear and conventional assets in case cyber acts cannot coerce by themselves. This gives the threat greater credibility. Liff (2012) only foresees an increase in protracted wars between two weak states because of the lack of strong conventional military backing. Even when an asymmetric advantage does exist for weaker states against stronger states, the weaker state might gain temporary leverage but will lack the ability to sustain it.

Even if kinetic threats strengthen the credibility of a cyber use of force and possibly prevent more costly kinetic wars, the initial use of cyber force might still create an opportunity for escalation to unrestricted cyberwar. Maneuvering exclusively in the cyber domain can generate extreme and debilitating effects, as discussed in the legality and morality section. Non-kinetic escalation could be substantial if the victim is a highly technology-dependent nation. For instance, the U.S. might think that a major cyber attack could coerce North Korea. North Korea might then assume the following: kinetic escalation is not worth the risk because of the threatened conventional U.S. strikes, the best method of counterattacking is in the cyber domain because of known U.S. vulnerabilities, and it has nothing to lose by unleashing its cyber counterattack in one massive undertaking before the U.S. exerts increased pressure. The resulting North Korean counterattack could destroy U.S. infrastructure, and the attempted U.S. coercion would not only fail but would also create an even worse situation. This again suggests that cyber attacks need to have limited objectives and clearly communicated military targets to minimize escalation. It is arguable that North Korea might still respond similarly even if the U.S. only attacked its military. Therefore, a better solution would be promising in-kind retaliation through a declaratory policy at the outset of a conflict to help reduce the possibility of miscalculation while trying to maneuver exclusively in the cyber domain (Mazanec, 2009).

Playing a dangerous game of risk that capitalizes on escalation fears is also what helps de-escalate. Schelling (1966) writes that a credible threat can often initiate something that might quickly get out of hand; this shared risk of escalation is a part of brinkmanship. The unpredictable nature of cyber effects lends itself to brinkmanship, and cyber attacks convey the power of the coercive threat of escalation. The point of the coercive threat is to give the adversary a choice. If the proposed conditions are not met, the threat of additional and more costly war makes the initial cyber act more powerful because of the knowledge that war could happen whether or not either side intended it (Schelling, 1966). Byman and Waxman (2002) also talk about balancing escalation. The inability to maintain cyber pressure can embolden the target, but sudden escalation and being strong at the outset create a powerful sense of urgency. The problem is finding the right blend: an act that is powerful enough to be noticed, but not so strong as to force the adversary to escalate to unrestricted cyberwar or total war.

When coercing, Schelling (1966) writes that it is critical to give "the last clear chance" (p. 44). By doing so, a nation maneuvers in such a way that the enemy now has the initiative to act or not. It gives the enemy a way to escape and save face. Freedman (1998) concurs that nations should want a bargain and need a bargain to save face; "total compliance is an act of submission" (p. 34). If the target nation complied only out of fear of a superior force, then it is unlikely any agreement would last. Take for example the Cuban Missile Crisis. The U.S. used bargaining and demonstrative military action but also gave the Soviet Union the option of a face-saving retreat (Cimbala, 1998). The blockade was not strong enough to remove the missiles already in Cuba, but it was able to stop future shipments through threats of escalation.

A major problem is that if the enemy believes an attack will happen regardless and not in response to something it did, then it has no incentive to be cautious and will probably attempt to guarantee that it gets the first shot. Schelling (1966) writes that this is the worst position a country can be in, where both sides are "trapped by a technology that can convert a likelihood of war into a certainty" (p. 225). A major difficulty with cyber coercion is whether it can be done without both sides thinking that cyber attacks will work for them only if they get the jump on the other. There is also a paradox within cyberwar: because of the nature of the targets, the more powerful the attack, the less likely the victim will be able to communicate and control its response, which can lead to escalation and more war. Communication problems will only occur with particular kinds of cyber attacks that have a network target, and so attackers do not need to cause communication problems if they do not want to. Ensuring there is a constant communications link between the two countries, in a manner similar to the red "hotline" between the U.S. and the U.S.S.R. during the Cold War, is critical (Mathers, 2007). Some of this uncertainty is necessary to prompt cautiousness; the inability to accurately predict the post-attack environment creates an important sense of hesitation.

A problem with carelessly designed offensive cyber acts is unintended effects like spillage onto the general Internet. Code can also be reverse-engineered to possibly work against the attacker's own poorly defended networks. Benefits of offensive cyber operations could be overshadowed by the risk of having one's own network vulnerabilities exploited. Attacks may not be able to find their targets effectively, and assumed vulnerabilities could be unknowingly patched, making reliable and successful malware difficult to build. Defensive measures like passwords, encryption, loggers, and access controls complicate precise targeting. Networks are layered with firewalls or disconnected outright, so knowing how an attack might behave in the target environment is difficult. Anything new comes with large upfront costs, and this is especially true of new software and new cyber weapons, which both have high error rates and low reliability. Even after the extensive preparation it took to develop Stuxnet, it still reached many non-targeted networks. The creators wanted to maximize the effect of the code by applying it to a "large array of centrifuges in Natanz" rather than focusing on more discreet targeting; "the worm then didn't recognize when its environment changed from secret to Internet" (Sanger, 2012, p. 204). As a result, the U.S. has lost some credibility when it now complains about other nations snooping around its own networks. Yet the willingness to release Stuxnet knowing that no code is perfect and that collateral damage is always possible could also be interpreted as a signal of resolve, which could bolster future coercive threats.

Another problem in adopting cyber coercion is what Byman and Waxman (2002) call an asymmetry of constraints, in that some countries are more constrained by legal norms than others. Less-powerful countries will always be more interested in upsetting the status quo and trying to capitalize on a means of attack to which the more powerful are quite vulnerable. Even the smallest of conflicts to the U.S. might seem overwhelming to certain adversaries, and so they might be more willing to cast aside international law at the outset. The U.S. could also use its technological advantage to create a new form of unilateralism, which unless properly managed could invite a cyber arms race (Sanger, 2012).

Designing a strategy to account for all the different nations, and even the fluctuations within the same country, is another major difficulty. It is what makes coercion so hard and why creating blanket foreign policies is impossible. Based on recent military history, an adversary could conclude that the U.S. is unwilling to escalate beyond air-strikes. Therefore, an adversary must simply wait for the strikes to subside and then resume its current path. This view might also translate to the way an adversary could react to cyber coercion. Air-strikes, sanctions, diplomatic pressure, and other forms of coercion can be interpreted as low-risk, low-commitment measures that are only used when policy-makers want to demonstrate they are not ignoring the problem. These low-cost tools are then sometimes interpreted as only being used when U.S. commitment is weak, which therefore weakens the overall coercive credibility (Byman & Waxman, 2002). However, such limited uses of force also flow from calculations of efficiency: why use more than needed to achieve the desired effect?